- The example (exampledag.py file) above just has 2 tasks, but if you have 10 or more then the redundancy becomes more evident.To avoid this you can use Airflow DAGs as context managers to.

- Triggered DAG example with workflow broken down into three layers in series. In order to execute this version of the flow from within Apache Airflow, only the initial job is executed.

- In Airflow, a DAG – or a Directed Acyclic Graph – is a collection of all the tasks you want to run, organized in a way that reflects their relationships and dependencies. A DAG is defined in a Python script, which represents the DAGs structure (tasks and their dependencies) as code.

- Bases: airflow.dag.basedag.BaseDag, airflow.utils.log.loggingmixin.LoggingMixin. A dag (directed acyclic graph) is a collection of tasks with directional dependencies. A dag also has a schedule, a start date and an end date (optional). For each schedule, (say daily or hourly), the DAG needs to run each individual tasks as their dependencies.

- Airflow Dag Schedule_interval

- Airflow Dag Api

- Airflow Dag Testing

- Airflow Dag Schedule_interval

- Airflow Dag Parameters

- Airflow Dag Cycle

Running airflow backfill (suggested here Airflow 'This DAG isnt available in the webserver DagBag object ') running airflow triggerdag; The scheduler log shows it being processed and no errors occurring, I can interact with it and view it's state through the CLI, but it still does not appear in the web UI.

We will learn how to write our first DAG step by step

Steps to write an Airflow DAG

A DAG file, which is basically just a Python script, is a configuration file specifying the DAG’s structure as code.

There are only 5 steps you need to remember to write an Airflow DAG or workflow:

- Step 1: Importing modules

- Step 2: Default Arguments

- Step 3: Instantiate a DAG

- Step 4: Tasks

- Step 5: Setting up Dependencies

Step 1: Importing modules

Import Python dependencies needed for the workflow

Step 2: Default Arguments

Define default and DAG-specific arguments

Step 3: Instantiate a DAG

Give the DAG name, configure the schedule, and set the DAG settings

Here is a couple of options you can use for your

schedule_interval. You can choose to use some preset argument or cron-like argument:| preset | meaning | cron |

|---|---|---|

None | Don’t schedule, use for exclusively “externally triggered” DAGs | |

@once | Schedule once and only once | |

@hourly | Run once an hour at the beginning of the hour | 0 * * * * |

@daily | Run once a day at midnight | 0 0 * * * |

@weekly | Run once a week at midnight on Sunday morning | 0 0 * * 0 |

@monthly | Run once a month at midnight of the first day of the month | 0 0 1 * * |

@yearly | Run once a year at midnight of January 1 | 0 0 1 1 * |

Example usage:

- Daily schedule:

schedule_interval='@daily'schedule_interval='0 0 * * *'

Step 4: Tasks

The next step is to lay out all the tasks in the workflow.

Step 5: Setting up Dependencies

- Set the dependencies or the order in which the tasks should be executed.

- Here are a few ways you can define dependencies between them:

Recap

- Basically, a DAG is just a Python file, which is used to organize tasks and set their execution context. DAGs do not perform any actual computation.

- Instead, tasks are the element of Airflow that actually “do the work” we want to be performed. And it is your job to write the configuration and organize the tasks in specific orders to create a complete data pipeline.

- Your final DAG will look like this:

As workflows are being developed and built upon by different team members, they tend to get more complex.

Airflow Dag Schedule_interval

The first level of complexity can usually be handled by some sort of error messaging - throw an error notification to a particular person or group based on a workflow's failure.

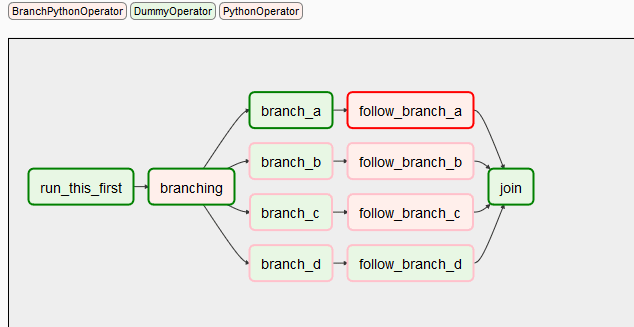

Branching can be helpful for performing conditional logic - execute a set of tasks based off of a condition. For situations where that is not enough - The TriggerDagRunOperator can be used to kick off entire DAGs.

Define a controller and a target DAG

The TriggerDagRunOperator needs a controller - a task that decides the outcome based on some condition, and a target, a DAG that is kicked off or not depending on the condition.

The controller task takes the form a python callable:

If the

dag_run_obj is returned, the target DAG can will be triggered. The dag_run_obj can also be passed with context parameters.Airflow Dag Api

The

target DAG should always be set to None for its schedule - the DAG should only be triggered by an external condition.Use Cases

Trigger DAGs are a great way to separate the logic between a 'safety check' and the logic to execute in case those checks aren't accomplished.

These sorts of checks are a good fail safe to add to the end of a workflow, downstream of the data ingestion layer.

On the same note, they can be used to monitor Airflow itself.

Metadata Trigger DAGs.

Error notifications can be set through various levels through a DAG, but propogating whose between different DAGs can valuable for other reasons. Suppose that after 5 DAG failures, you wanted to trigger a systems check

Sensors and TriggerDAGs

Airflow on Airflow.

As Airflow operations are being scaled up, error reporting gets increasingly difficult. The more failure emails that are being sent out, the less each notification matters. Furthermore, a certain threshold of failures could indiciate a deeper issue in another system.

Using a Sensor and TriggerDag can provide a clean solution to this issue,

Checking the database for a threshold of failures.

Airflow Dag Testing

DagFailureSensor

A sensor can be used to check the metadatabase for the status of DagRuns. If the number of failed runs is above a certain threshold (different for each DAG), the next task can trigger a systems check DAG.

Airflow Dag Schedule_interval

The sensor can then be implemented as such:

Airflow Dag Parameters

Adding Trigger Rules

Receipts 1 9 3 – smart document collection. Depending on the rest of the infrastructure, different 'checks' may all trigger the same system level check.

Airflow Dag Cycle

If that is the case, TriggerDagOperators should be set with a different

trigger_rule